Guidelines for Reviewers


Overview

As an ALMA proposal reviewer, you play a key role in ensuring a fair, competitive, and transparent evaluation process. Your rankings and written reviews help select the most compelling proposals and provide valuable guidance to proposers and fellow reviewers.

Peer review is vital but challenging. It requires careful analysis, clear communication, and awareness of potential biases. To ensure fairness, evaluate each proposal based on its merits while minimizing any biases that could unintentionally influence your judgment.

To ensure your reviews are thorough and effective, follow these recommended steps:

Preparation

- Allocate sufficient time: Set aside enough time to thoroughly review the proposals. This includes reading each proposal, drafting your reviews, finalizing your rankings, and re-reading your reviews for clarity and accuracy. For distributed peer review, expect to spend 2-3 days reviewing a Proposal Set (i.e., 10 proposals).

- Mitigate unconscious bias: Unconscious bias can unintentionally influence your evaluations. Recognizing and minimizing this bias is important to ensure fairness. For more information, refer to the section on Unconscious Bias in the Review Process.

- Understand the review criteria: Familiarize yourself with the review criteria for evaluating proposals (see Review Criteria).

- Familiarize yourself with key policies: Please read the Code of Conduct and Confidentiality, Policy on Use of Generative Artificial Intelligence, Conflicts of Interest, and Role of the Mentor for non-PhD reviewers sections to ensure a fair and ethical review process.

Review proposals

- Read the proposals thoroughly: Review the entire proposal, including the abstract, scientific justification, and technical justification.

- Write constructive and clear reviews: Provide specific and actionable feedback. Highlight strengths and weaknesses clearly and offer suggestions for improvement where appropriate. See How to write a useful proposal review for more tips on writing informative reviews.

Rank proposals

- Rank proposals against the review criteria: Rank proposals according to the established criteria, ensuring that your rankings align with the balance of strengths and weaknesses in your review.

Learn from other reviewers

- Learn from other reviewers in Stage 2: Stage 2 offers the opportunity to access the comments from the other reviewers for your assigned proposals, providing valuable insights that can help refine your rankings and reviews. For details on the Stage 2 process for distributed peer review, see here.

The rest of this guide provides additional tips and outlines the relevant policies to help you conduct a thorough and insightful review.

Code of Conduct and Confidentiality

All participants in the review process are expected to behave in an ethical manner.

- Reviewers will judge proposals solely on their scientific merit.

- Reviewers will be mindful of bias in all contexts.

- Reviewers will declare all major conflicts of interest.

- Reviewers will write constructive reviews and avoid any inappropriate language.

- Reviewers will not use generative Artificial Intelligence (GAI) to evaluate or rank proposals (see Generative Artificial Intelligence policy).

All proposal materials related to the review process are strictly confidential.

- The assigned proposals should not be distributed or used in any manner not directly related to the review process.

- Any data, intellectual property, and non-public information shown in the proposals may be used only for the purpose of carrying out the requested proposal review.

- The assigned proposals and the reviews may not be discussed with anyone other than the Proposal Handling Team, or the assigned mentor when applicable.

- Following the GAI policy, reviewers and mentors will not input any part of the assigned proposals into GAI or machine learning tools.

- All electronic and paper copies of the proposal materials must be destroyed as soon as a reviewer completes the proposal review process.

If a reviewer fails to uphold ethical standards or does not complete their reviews in good faith, the proposal(s) for which they are the designated reviewer may be disqualified.

Conflicts of Interest

The goal of the review assignments is to provide informed and unbiased assessments of the proposals. A major conflict of interest occurs when a reviewer’s personal or work interests would benefit if the proposal under review is accepted or rejected.

To support the identification of conflicts of interest, reviewers may provide a list of investigators with whom they have a major conflict of interest. If submitted, this list will be used to ensure that reviewers are not assigned any proposal in which the PI, co-PI, or co-I appear on their list. The list must be submitted by 28 April 2026, 15:00 UTC to be considered during the proposal assignment process.

APRC members and External Science Assessors reviewing Large Programs must complete their conflict-of-interest list by the stated deadline so that conflicts can be properly accounted for in the assignment process.

How to set up the list of conflicts of interest

- Reviewers can set up their conflicts-of-interest list through their user preferences on the ALMA Science Portal by searching for names in the ALMA user database.

- Only registered ALMA users need to be included in the list because all proposers, and therefore all reviewers, must be registered.

Who to include in the conflicts-of-interest list

- Close collaborators. Defined as a substantial collaboration on three or more papers within the past three years or an active, substantial collaboration on a current project.

Note: Membership in a large project team on its own does not constitute a conflict of interest.

- Students and postdocs under supervision of the reviewer within the past three years.

- A reviewer’s supervisor (for student and postdoc reviewers).

- Close personal ties (e.g., family member, partner) who are ALMA users.

- Other reasons. Any other circumstances where the reviewer believes a major conflict exists.

Identification of conflicts during proposal assignment

Before assigning proposals, the PHT will identify major conflicts of interest based on the following criteria:

Declaring additional conflicts of interest

When reviewers receive their proposal assignments, they may identify additional conflicts of interest that were not identified by the above checks. Potential conflicts of interest at this stage include:

- The reviewer is proposing to observe the same object(s) with similar science objectives as the proposal under review.

- The reviewer has provided significant advice to the proposal team on the proposal even though the reviewer is not listed as an investigator.

- Any other reasons that the reviewer believes there is a strong conflict of interest.

Reviewers should inform the PHT of any major conflict of interest in their assignments by rejecting the proposal assignment and indicating why they believe a major conflict of interest exists. This is done through the Reviewer Tool (for distributed peer review) or the Assessor Tool (for the APRC). The PHT will evaluate the reported conflict(s). In distributed peer review, if the conflict of interest is approved, the reviewer will be assigned a new proposal.

Student reviewers participating in distributed peer review must declare any conflict for both themselves and their mentor. Since mentors have read-only access to their assigned proposals, students are responsible for declaring all applicable conflicts. Student reviewers should consult with their mentor to ensure that conflicts are identified accurately.

What is not a conflict of interest

- Suspecting the identity of the proposal team.

- Lack of expertise with a given proposal.

Unconscious Bias in the Review Process

Bias in the review process occurs when a reviewer unknowingly favors or disfavors a proposal for reasons unrelated to its scientific merit. These biases are shaped by culture and personal experiences, making unconscious bias a challenge for all reviewers. Common examples include biases based on culture, age, prestige, language, gender, and institutional affiliation.

As an ALMA reviewer, recognizing and addressing unconscious bias is essential for ensuring a fair and objective review process. Cognitive biases—such as anchoring (overemphasizing initial impressions), confirmation bias (seeking evidence to confirm preexisting beliefs), and the halo effect (allowing a researcher’s reputation to influence evaluations)—can unintentionally undermine the fairness and quality of assessments.

To help reduce these influences, ALMA has adopted a dual-anonymous review system. You can also take the following steps to further mitigate bias:

- Allow Sufficient Time: Avoid rushing through your reviews, as bias tends to increase under time pressure. Allocate adequate time to evaluate each proposal carefully and critically reflect on your decisions to ensure your feedback is balanced and impartial. Ask yourself questions like “Why do I think this?” or “Is my judgment supported by information provided in the proposal?”

- Use a Structured Approach: Apply the review criteria consistently across all proposals. Take notes and base your feedback on specific examples from the proposal.

- Actively Reflect: Be mindful of how initial impressions or assumptions may influence your evaluation. If you have a strong opinion about a proposal, challenge yourself to consider alternative perspectives. Once you have finalized your reviews, ask yourself:

- Have I distinguished personal preferences from objective criteria?

- Am I reacting to style over the scientific content?

- Is my feedback based on evidence presented in the proposal?

- Justify your assessments: Support your evaluations with specific examples from the proposal and relevant literature references where appropriate.

- Follow dual-anonymous guidelines: Avoid speculating about the identity or affiliation of the proposers.

By actively checking for unconscious biases and implementing these strategies, you can help ensure the integrity, fairness, and quality of the ALMA review process.

Policy on Use of Generative Artificial Intelligence

ALMA has adopted a policy on the acceptable use of Generative Artificial Intelligence (GAI) in preparing and reviewing ALMA proposals. The policy balances the benefits of GAI with the need to preserve human judgment, scientific expertise, and confidentiality. Reviewers are strongly encouraged to read the full policy available in Appendix C of the ALMA User’s Policies. Below is a summary of the key points relevant to proposal reviewers and mentors:

- GAI tools must not be used to recommend ranks, evaluate proposal strengths and weaknesses, or perform any other evaluation task.

- Reviewers and mentors are strictly prohibited from entering any content from assigned proposals into GAI or machine learning tools, as this breaches the confidentiality of the review process.

- Reviewers may use GAI tools only to correct grammar or improve the readability of their reviews. If GAI tools are used, reviewers need to consider that:

- Sensitive information related to proposals must be removed before using GAI tools. No proposal-specific or confidential information may be input into these tools.

- Reviewers are fully responsible for ensuring that any output generated by GAI tools is accurate and complies with ALMA’s guidelines.

Reviewers and mentors must adhere to this policy. Failure to comply may result in the disqualification of the reviewer’s own proposal.

Role of the Mentor for non-PhD reviewers

Reviewers participating in the distributed review process who do not have a PhD are required to have a mentor who will assist with the proposal review. The mentor is specified in the ALMA Observing Tool (OT) when preparing the proposal; they are not required to be a member of the proposal team. In general, the mentor provides guidance to the student throughout the review process. They have access to the proposals and the reviews of their mentees through the Reviewer Tool in read-only mode.

Specific roles of a mentor include:

- Work with the reviewer to declare any conflicts of interest on the assigned proposals. The conflicts of interest criteria apply to both the reviewer and the mentor.

- Provide advice to the reviewer as needed on the scientific assessment of the proposals.

- Provide guidance to the reviewer on providing constructive feedback to the PIs.

- Review the comments to the PI before they are submitted.

Review Criteria

Each proposal contains the following sections, which reviewers are required to read:

- Abstract,

- Scientific Justification,

- Technical Justification.

- Note: The Technical Justification typically provides a detailed justification of the requested sensitivity, angular resolution, and correlator setup, which is important for evaluating the proposal.

Criteria Applicable to All Proposals

- Scientific Merit

Assess the overall scientific merit of the proposed investigation and its potential contribution to the advancement of scientific knowledge based on the following questions:

- Does the proposal clearly indicate which important, outstanding questions will be addressed?

- Will the proposed observations have a high scientific impact on this particular field and address the specific science goals of the proposal?

Note: ALMA encourages reviewers to consider well-designed high-risk/high-impact proposals even if there is no guarantee of a positive outcome or detection.

- Does the proposal clearly describe how the data will be analyzed in order to achieve the science goals?

- Suitability of Observations

Evaluate the suitability of the observations to achieve the scientific goals considering the following questions:

- Is the choice of target (or targets) clearly described and well justified?

- Are the requested signal-to-noise ratio, angular resolution, largest angular scale, and spectral setup sufficient to achieve the science goals, and are they well justified?

- Does the proposal justify why new observations are needed to achieve the science goals?

- For Joint Proposals (see the Proposer’s Guide), does the proposal clearly describe why observations from multiple observatories are required to achieve the science goals?

Important: Base your assessment solely on the content of the proposal according to the above criteria. Proposals may contain references to published papers (including preprints) as per standard practice in scientific literature. Consultation of those references should not, however, be required for a general understanding of the proposal.

Additional Criteria for Large Programs

For Large Programs, in addition to the review criteria listed above, reviewers should also consider the following factors:

- Scientific impact

- Does the Large Program address a strategic scientific issue and have the potential to lead to a major advance or breakthrough in the field that cannot be achieved by combining regular proposals?

- Value of data products

- Are the data products that will be delivered by the proposal team appropriate given the scope of the proposal?

- Will these products be valuable and beneficial to the broader scientific community?

- Publication plan

- Is the proposed publication plan appropriate for the scope and goals of the project?

- Team organization and resources

- Are the organization of the team and the available computing resources sufficient to complete the project in a timely fashion?

- Team expertise

The APRC will evaluate the team expertise statements for Large Programs to assess if the proposal team is prepared to complete the project in a timely fashion.

The team expertise statements will be evaluated only after the APRC has completed the scientific rankings of the Large Programs. The evaluation of the team expertise statements will not be used to modify the scientific rankings. Any concerns that the APRC has about the team expertise of a Large Program will be communicated to the ALMA Director, who will make the final decision on whether to accept the proposal.

- Technical and scheduling feasibility

JAO will assess the technical feasibility and scheduling feasibility of the Large Programs and report the results to the APRC.

Notes on Review Criteria Applicable to All Proposals

- Technical Feasibility

- The OT validates most technical aspects of the proposal; e.g., the OT verifies that the angular resolution can be achieved, verifies the correlator setup is feasible, and provides an accurate estimate of the integration time needed to achieve the requested sensitivity. Reviewers should assume that the OT technical validation of the proposal is correct.

- Reviewers should consider in their evaluation if the requested signal-to-noise ratio, angular resolution, largest angular scale, and spectral setup as requested by the PI are sufficient to achieve the scientific goals of the proposal and are well justified.

- Reviewers should not downgrade a proposal based solely on the requested observation time.

- Scheduling Feasibility

- Reviewers should not consider the scheduling feasibility in their rankings. JAO will assess this separately when building the observing queue and forward this information to the PI when needed.

- Dual-anonymous

- If you suspect anonymity guidelines have been violated, notify the PHT using the "Comment to JAO" box in the Reviewer Tool while continuing to evaluate the proposal based on scientific merit.

- Do not mention anonymity concerns in your comments to the PI. The PHT will address any violations separately.

- See the dual-anonymous guidelines for reviewers for complete guidance.

- Resubmissions and Joint Proposals

- Resubmissions: Reviewers should evaluate a resubmission of a previously accepted proposal as they would any other proposal according to the review criteria. If the proposal is accepted, any science goals which have already been observed will be descoped.

- Joint Proposals: Joint proposals should be evaluated as any other proposal, following the review criteria stated above.

Reviewer Support

Support is available to reviewers throughout the review process. To ensure that questions and issues are handled effectively and in a timely manner, reviewers are encouraged to raise their concerns as early as possible and to follow the recommendations below.

Submit a ticket to the “Proposal Review Support” department in the ALMA Helpdesk for any issue that requires prompt attention from the PHT, particularly when timely resolution is needed to complete reviews. Examples include questions about a proposal, potential conflicts of interest, or problems with the Reviewer Tool.

Use the “Comment to the JAO” field in the Reviewer Tool to report non-urgent matters, such as potential violations of the dual-anonymous guidelines or PDF format requirements, or other proposal-specific notes that do not require immediate action.

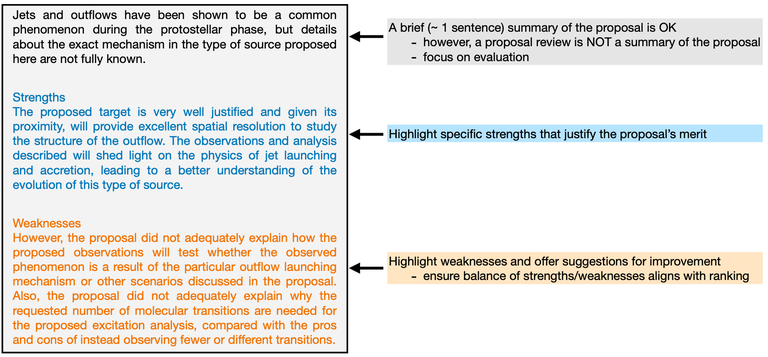

How to write a useful proposal review

Clear and constructive reviews are crucial to maintaining a fair and transparent evaluation process. They help proposers refine future submissions and provide valuable input to other reviewers in the Stage 2 process. Reviews must be written in English.

Guidelines for writing effective reviews

- Highlight strengths and weaknesses

- A review should not simply summarize the proposal. While a brief overview is fine, the majority of the review should focus on the strengths and weaknesses.

- Clearly outline both strengths and weaknesses. This allows the PI to understand what aspects of the project are strong, and where improvements are needed for future proposals.

- Focus on major strengths and major weaknesses. Even when a proposal is well executed and scientifically sound, it may rank lower due to limited scientific impact, urgency, or compelling nature. In these cases, acknowledge the proposal's merits while clearly explaining what prevents it from ranking higher.

- Ensure that the balance of the strengths and weaknesses aligns with your ranking of the proposal.

- Be objective and specific

- Offer specific, constructive feedback on how the proposal could be improved.

- Support your statements with references or examples from the proposal.

- Avoid vague statements that could apply to any proposal, or generic reviews where only minimal details are changed between proposals.

- Be professional and constructive

- Avoid inflammatory or inappropriate language, even if you think the proposal could be significantly improved.

- Do not use sarcasm or any insulting language.

- Be concise

- The quality of a review is more important than its length. Effective reviews are focused, clear and informative. However, reviews that are overly brief often lack sufficient detail to be useful. Typical reviews are about 6-8 sentences.

- Be aware of unconscious bias

- We all have biases and we need to make special efforts to review the proposals objectively. We encourage reviewers to be mindful of unconscious bias during the review process. For more information, see Unconscious Bias in the Review Process.

- Other best practices

- Use complete sentences when writing your reviews. We understand that many reviewers are not native English speakers, but aim for correct grammar, spelling, and punctuation.

- Do not include comments about scheduling feasibility. ALMA will assess the scheduling feasibility when building the observing queue and forward this information to the PI when needed.

- Do not include remarks about possible dual-anonymous violations in the review. Simply note the possible violation in the “Comments to JAO”.

- Do not include explicit references to other proposals that you are reviewing, such as project codes.

- Do not ask questions in your review. A question is usually an indirect way to indicate there is a weakness in the proposal, but the weakness should be stated directly and clearly.

- Critique the proposal and not the proposal team. For example, instead of writing "The PI did not ...", use "The proposal did not ...".

- Review your comments before submitting

- After completing your review, read your comments from the proposer’s perspective. Revise any feedback that may sound overly harsh, unclear, or ambiguous to ensure it is constructive, respectful, and professional.

- Verify that the strengths and weaknesses discussed in your comments are consistent with the ranking you assigned to the proposal. If inconsistencies are found, adjust either the comments or the ranking as appropriate.

- Before submitting, ensure that your review is clear, well supported by proposal-specific examples, and provides actionable feedback.

Quick Guide to a Helpful Review

The table below summarizes the key characteristics of high-quality reviews and the most common limitations of adequate and low-quality reviews. This quick guide serves as a practical tool to help reviewers prepare clear, consistent, and constructive comments for PIs. Additionally, it provides examples to illustrate the different levels of review quality.

| | High Quality | Adequate | Low Quality |
| --- | --- | --- | --- |
| Criteria | The review provides clear, specific, actionable, and constructive feedback that effectively identifies the proposal's strengths and weaknesses. | The review offers some useful insights but lacks the detail, clarity, or specificity needed to be fully effective. | The review fails to provide meaningful feedback, contains significant errors, or adopts an unprofessional tone. |
| Examples | Clearly identifies a strength, explains why the target is well motivated, and uses specific details from the proposal.<br>Clearly identifies a weakness and explains its scientific relevance while maintaining a professional tone. | Identifies a strength but lacks explanation of why proximity matters scientifically.<br>Points out an issue but does not explain the implications or how to address it.<br>Highlights an issue but does not explain why this matters for the proposal's goals. | Vague praise that provides no insight into strengths.<br>Does not specify which aspects are unclear.<br>Focuses on trivial issues.<br>Dismissive tone with no supporting justification.<br>The comment is overly general with no specifics on the proposal or constructive feedback. |

Review Quality Assurance

High-quality reviews are essential to a fair and effective review process. The PHT monitors review quality through spot checks and automated flagging systems. When problematic reviews are identified, the PHT will contact reviewers to request revisions.

All reviewers are expected to meet the quality standards outlined in this guide.

- Common reasons for flagging a review for revision

- Primarily summarizes the proposal without evaluating its strengths and weaknesses

- Contains generic or vague feedback (see Quick Guide examples)

- Shows a misalignment between written comments and assigned ranking

- Reuses identical review text across multiple proposals with only minor changes (e.g., target names)

- Uses unprofessional language (sarcastic, dismissive, or harsh tone)

- Exhibits other characteristics of Low Quality reviews, as defined in the Quick Guide above

- Revision Process

- If a review is flagged for revision, reviewers will receive an email from the PHT identifying the review(s) that require revision and explaining the specific issues. Revised reviews must be submitted through the Reviewer Tool by the deadline specified in the notification.

Example review

Here is an example review that conforms with the above guidelines.

Your Feedback Matters

ALMA is committed to the continuous improvement of the review process. Feedback from reviewers on these guidelines, the review tools, or the overall process is welcome and can be submitted through the ALMA Helpdesk or the reviewer survey available in the Reviewer Tool.
